Smart AI feature design starts with a sobering reality: 82% of enterprise leaders say IT complexity impedes success. In fact, poorly implemented AI can worsen this problem. Zillow lost over $500 million due to flawed AI models, while IBM’s Watson struggled with adoption despite a $5 billion investment.

AI promises to simplify, but it often adds layers of complexity instead.

In this guide, we’ll show you how to design AI features that genuinely reduce complexity. You’ll learn to assess AI value, choose the right design patterns, and create a transparent AI feature UI that users trust.

Assessing Whether AI Actually Adds Value

Building AI features without proper assessment leads teams down expensive paths. The pattern repeats: organizations start with “we need AI” and work backward, never stopping to ask whether AI solves an actual problem. This approach wastes resources and creates systems users don’t trust.

Avoid the AI-First Trap

The AI-first methodology sounds appealing. You prioritize artificial intelligence as the foundational element of product design, with learning systems that anticipate needs and personalize at scale. But this framework becomes dangerous when applied without discrimination.

Write a one-sentence problem statement before any technical work begins. If you cannot articulate the business problem clearly, you are not ready to build. Teams that skip this step end up with products that feel like prototypes disguised as finished work.

Start with the business problem, then determine whether AI is the right solution. Ask yourself: could rules or heuristics solve this adequately? Does the problem require learning from data? Small datasets, such as fewer than 50 interviews or a few hundred survey responses, often work better with traditional analysis methods.

Evaluate AI’s Four Core Capabilities

AI excels at four specific tasks: content creation, summarization, basic data analysis, and perspective taking. These capabilities define where AI adds genuine value versus where it introduces unnecessary complexity.

For content creation and summarization tasks, assess quality using three criteria from Natural Language Generation: faithfulness, relevance, and coherence. Faithfulness checks if AI-generated information matches the source factually. Relevance determines whether the AI selected the most important content. Coherence evaluates the overall quality of generated sentences.

Data analysis tasks require different metrics. Classification work demands accuracy, precision, recall, balanced accuracy, and F1 scores. These metrics measure the overlap between machine-predicted labels and human-annotated ground truth labels. Context determines acceptable thresholds. For initial literature identification with highly imbalanced data, recall scores of 0.75 and precision of 0.60 may suffice. Tasks with higher evaluative stakes require stronger metrics.
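
To make these metrics concrete, here is a minimal sketch that computes them for a binary task by comparing predicted labels with human-annotated ones. The names `Report`, `evaluate_binary`, and `meets_screening_bar` are illustrative assumptions, and the default thresholds simply reuse the 0.75 recall and 0.60 precision example above.

```python
from dataclasses import dataclass


@dataclass
class Report:
    accuracy: float
    precision: float
    recall: float
    balanced_accuracy: float
    f1: float


def _ratio(numerator: float, denominator: float) -> float:
    # Return 0.0 instead of raising when a class is absent from the data.
    return numerator / denominator if denominator else 0.0


def evaluate_binary(y_true: list, y_pred: list) -> Report:
    """Compare predicted labels (1/0) with human-annotated labels (1/0)."""
    if len(y_true) != len(y_pred):
        raise ValueError("label lists must have the same length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)

    precision = _ratio(tp, tp + fp)
    recall = _ratio(tp, tp + fn)
    specificity = _ratio(tn, tn + fp)
    return Report(
        accuracy=_ratio(tp + tn, len(pairs)),
        precision=precision,
        recall=recall,
        balanced_accuracy=(recall + specificity) / 2,
        f1=_ratio(2 * precision * recall, precision + recall),
    )


def meets_screening_bar(report: Report, min_recall: float = 0.75,
                        min_precision: float = 0.60) -> bool:
    # Thresholds are context dependent; raise them for higher-stakes tasks.
    return report.recall >= min_recall and report.precision >= min_precision
```

On imbalanced data, compare `balanced_accuracy` with `accuracy`: a model that always predicts the majority class can score high on the latter while failing the former.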

Ask Three Key Questions Before Integration

Three questions separate valuable AI implementations from costly mistakes.

First, are you designing for unpredictability or pretending it doesn’t exist? Hallucinations and errors are not edge cases with language models. They are built into how these systems work. Users need to know what happens when AI gives unexpected results and how they recover when the system misunderstands them.

Second, what are you asking users to trust you with, and what are they getting back? Trust operates as a value exchange. Users provide data, time, and attention. You deliver intelligence worthy of that exchange. The more data fed into an AI experience, the more relevant it becomes. But users need to believe this exchange carries value.

Third, what are you actually making, and for whom? High-risk domains like healthcare and finance require extra scrutiny. The data is sensitive, consequences of errors are serious, and users need guarantees that AI interfaces cannot yet provide.

These questions force honest evaluation. Not every task benefits from AI, so align your experiments with the tasks that genuinely do. The decision weighs accuracy and usefulness against learning curve, error cost, and privacy concerns.

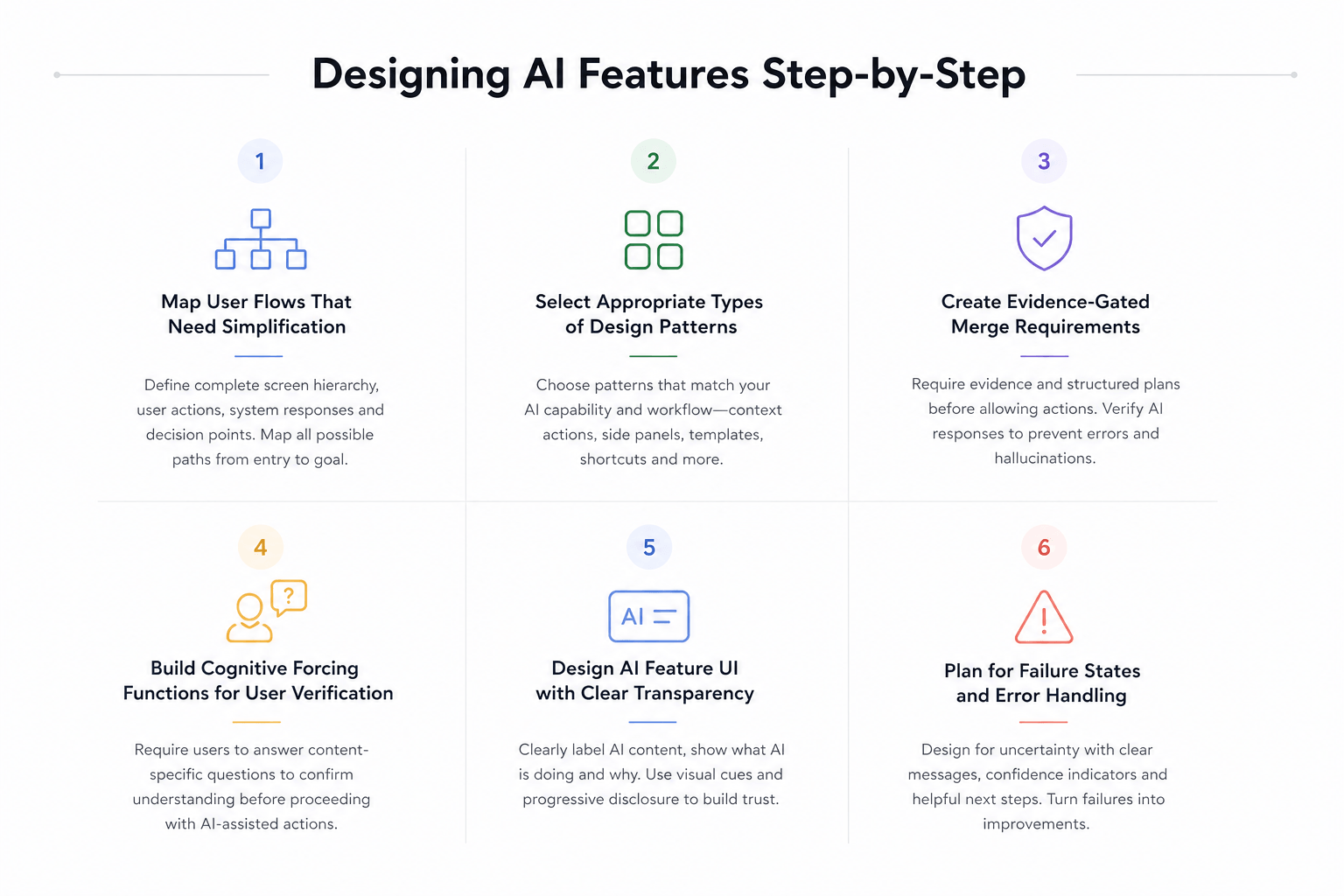

Designing AI Features Step-by-Step

Sound AI feature design requires structured implementation. The six steps below transform assessment into working features that users understand and trust.

Step 1: Map User Flows That Need Simplification

Building screens without flow structure produces isolated outputs, not navigable applications. Apps built without pre-defined navigation hierarchies have 3× higher abandonment rates and require 42% more post-launch rework. Define the complete screen hierarchy, parent-child relationships, and navigation flows before generation begins.

Start by identifying entry points and end goals. List key steps including user actions, system responses, and decision points. Define branching logic for each outcome. Map all possible user interactions from each entry point, accounting for both positive and negative paths. This becomes especially critical for AI systems that require robust error handling.
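
One way to keep a flow map honest is to store it as data and check it automatically. The sketch below is an assumption about how that could look: `Step` and `find_flow_gaps` are hypothetical names, and the check only flags non-terminal steps that lack a success or failure branch, references to undefined steps, and steps unreachable from the entry point.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Step:
    name: str
    on_success: Optional[str] = None  # next step on the positive path
    on_failure: Optional[str] = None  # next step on the negative path
    is_end: bool = False              # True for an end goal


def find_flow_gaps(steps: List[Step], entry: str) -> List[str]:
    """Walk the flow from the entry point and report missing branches."""
    by_name = {s.name: s for s in steps}
    problems: List[str] = []
    seen, stack = set(), [entry]
    while stack:
        name = stack.pop()
        if name in seen:
            continue
        seen.add(name)
        step = by_name.get(name)
        if step is None:
            problems.append(f"undefined step: {name}")
            continue
        if step.is_end:
            continue
        if step.on_success is None:
            problems.append(f"{name}: no success path")
        if step.on_failure is None:
            problems.append(f"{name}: no failure path")
        stack.extend(n for n in (step.on_success, step.on_failure) if n)
    problems.extend(f"unreachable step: {n}" for n in by_name if n not in seen)
    return problems
```

For example, a flow where a clarification step has no fallback would be reported as `"clarify: no failure path"`, which is exactly the kind of gap that surfaces later as a dead end for users.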

Step 2: Select Appropriate Types of Design Patterns

Different AI capabilities demand different interaction patterns. Context-driven actions reduce cognitive load by presenting options precisely when users need them. Side panels support complex workflows requiring multiple steps. Quick actions and shortcuts streamline access to core features.

For content generation tasks, use wayfinders to help users construct their first prompt, example galleries to share sample generations, and templates that provide structured starting points. Inline actions let users interact with AI contextually based on existing page content. Choose patterns that match your specific AI capability and user workflow.

Step 3: Create Evidence-Gated Merge Requirements

Evidence-Gated Generation decomposes AI output into sentence-level units paired with candidate evidence items. Each unit gets evaluated using a cross-encoder NLI model that scores entailment and contradiction. Units without sufficient support get pruned before reconstruction.
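
The pruning step can be sketched as follows. This is a simplified illustration, not a reference implementation: the scorer is passed in as a function so that a real cross-encoder NLI model could be plugged in, and `toy_overlap_scorer` is a deliberately crude word-overlap stand-in used only to keep the example runnable. The function names and both thresholds are assumptions.

```python
import re
from typing import Callable, List, Tuple

# (premise, hypothesis) -> (entailment score, contradiction score)
Scorer = Callable[[str, str], Tuple[float, float]]


def split_units(text: str) -> List[str]:
    """Naive sentence splitter on ., ! and ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def gate(output: str, evidence: List[str], score: Scorer,
         min_entail: float = 0.7, max_contra: float = 0.3) -> str:
    """Keep only the units that at least one evidence item supports."""
    kept = []
    for unit in split_units(output):
        scores = [score(item, unit) for item in evidence]
        if any(e >= min_entail and c <= max_contra for e, c in scores):
            kept.append(unit)
    return " ".join(kept)  # reconstruct the answer from surviving units


def toy_overlap_scorer(premise: str, hypothesis: str) -> Tuple[float, float]:
    # Stand-in only: word overlap is NOT entailment. Swap in an NLI model.
    p = set(re.findall(r"\w+", premise.lower()))
    h = set(re.findall(r"\w+", hypothesis.lower()))
    entailment = len(p & h) / len(h) if h else 0.0
    return entailment, 0.0
```

With evidence containing a single log-derived statement, a sentence that restates it survives while an unsupported sentence is dropped before the answer is reassembled.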

Restrict agents to read-only tools until they provide structured technical plans or root cause analysis. Require trace evidence (logs and diffs) before unlocking write permissions. This verification step makes AI prove its logic before committing changes, preventing the hallucination chimney where agents debug their own bad code rather than actual system issues.
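
A permission gate of this kind might look like the sketch below. The tool names and the `AgentSession` class are hypothetical, and the check is intentionally simple: it only confirms that a plan and at least one non-empty piece of trace evidence were submitted, leaving the judgment of their quality to a reviewer.

```python
from dataclasses import dataclass, field
from typing import List, Set

READ_ONLY_TOOLS = {"read_file", "search_logs"}  # hypothetical tool names
WRITE_TOOLS = {"write_file", "apply_patch"}     # hypothetical tool names


@dataclass
class AgentSession:
    plan: str = ""
    evidence: List[str] = field(default_factory=list)  # log excerpts, diffs

    def submit_plan(self, plan: str, evidence: List[str]) -> None:
        self.plan = plan.strip()
        self.evidence = [item for item in evidence if item.strip()]

    def allowed_tools(self) -> Set[str]:
        # Write access unlocks only once a plan AND trace evidence exist.
        if self.plan and self.evidence:
            return READ_ONLY_TOOLS | WRITE_TOOLS
        return set(READ_ONLY_TOOLS)

    def call(self, tool: str) -> str:
        if tool not in self.allowed_tools():
            raise PermissionError(f"{tool} is locked until a plan and evidence are submitted")
        return f"ran {tool}"
```

Raising an error rather than silently ignoring the call makes the refusal visible in the agent's own trace, so the missing evidence becomes the next thing it has to produce.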

Step 4: Build Cognitive Forcing Functions for User Verification

Require users to answer content-specific questions that verify they’ve processed the primary information independently. This active verification reduces overreliance significantly compared with simply showing AI explanations. People frequently accept AI suggestions even when they are wrong, so cognitive forcing creates deliberate checkpoints.

Design verification that requires genuine engagement. Ask users to confirm understanding of key context before allowing AI-assisted actions. This pattern works particularly well for high-stakes decisions where blind acceptance creates risk.
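
As a rough illustration, a checkpoint can be modeled as a question whose answer comes from the source content, with the AI-assisted action running only after the user answers correctly. `Checkpoint`, `passes`, and `run_assisted_action` are hypothetical names, and exact-match answer checking is a simplifying assumption.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Checkpoint:
    question: str
    accepted_answers: frozenset  # normalized answers drawn from the source content


def _normalize(text: str) -> str:
    # Lowercase and collapse whitespace so trivial differences do not block users.
    return " ".join(text.lower().split())


def passes(checkpoint: Checkpoint, answer: str) -> bool:
    return _normalize(answer) in checkpoint.accepted_answers


def run_assisted_action(checkpoint: Checkpoint, answer: str,
                        action: Callable[[], str]) -> str:
    """Run the AI-assisted action only after the user passes the checkpoint."""
    if not passes(checkpoint, answer):
        return "Blocked: please review the source material and try again."
    return action()
```

The question should be answerable only by reading the primary material, not by reading the AI's summary of it.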

Step 5: Design AI Feature UI with Clear Transparency

Apply a specialized visual treatment that consistently separates AI content from non-AI content. Use a blue glow and gradient highlights for AI-generated elements. Add explicit AI labels with popovers that explain the AI’s role in specific scenarios. Flag AI-generated content with descriptive text like “Summarized by AI” alongside messages encouraging users to verify the content.

Progressive disclosure reveals just enough for users to trust without overwhelming them. Show what AI is doing (visibility), why decisions are made (explainability), and allow users to influence outcomes (accountability).

Step 6: Plan for Failure States and Error Handling

Design different interaction patterns based on confidence levels. Use solid borders for high confidence, dotted borders for low confidence, and color coding (green for confident, yellow for uncertain, red for low confidence). When AI encounters uncertainty, communicate clearly: “I’m not confident about this answer. Let me ask for more details” rather than technical error messages.
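
The mapping from confidence to presentation can be expressed as a small lookup. In the sketch below, the two numeric thresholds are assumptions that you would tune for your own model, and `Treatment` and `treatment_for` are illustrative names; the border styles, colors, and low-confidence message follow the description above.

```python
from dataclasses import dataclass
from typing import Optional

HIGH_THRESHOLD = 0.8  # assumption: at or above this counts as confident
LOW_THRESHOLD = 0.5   # assumption: below this counts as low confidence

LOW_CONFIDENCE_MESSAGE = (
    "I'm not confident about this answer. Let me ask for more details."
)


@dataclass(frozen=True)
class Treatment:
    border: str
    color: str
    message: Optional[str] = None


def treatment_for(confidence: float) -> Treatment:
    """Map a confidence score in [0, 1] to a visual treatment."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= HIGH_THRESHOLD:
        return Treatment("solid", "green")
    if confidence >= LOW_THRESHOLD:
        return Treatment("dotted", "yellow")
    return Treatment("dotted", "red", LOW_CONFIDENCE_MESSAGE)
```

Keeping the thresholds in one place makes it easier to adjust them as you learn how well the model's confidence scores match its actual accuracy.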

Provide specific next steps instead of generic retry prompts. Include alternative paths like browsing categories when search fails or accessing human support when AI cannot help. Build feedback loops that turn mistakes into visible improvements.

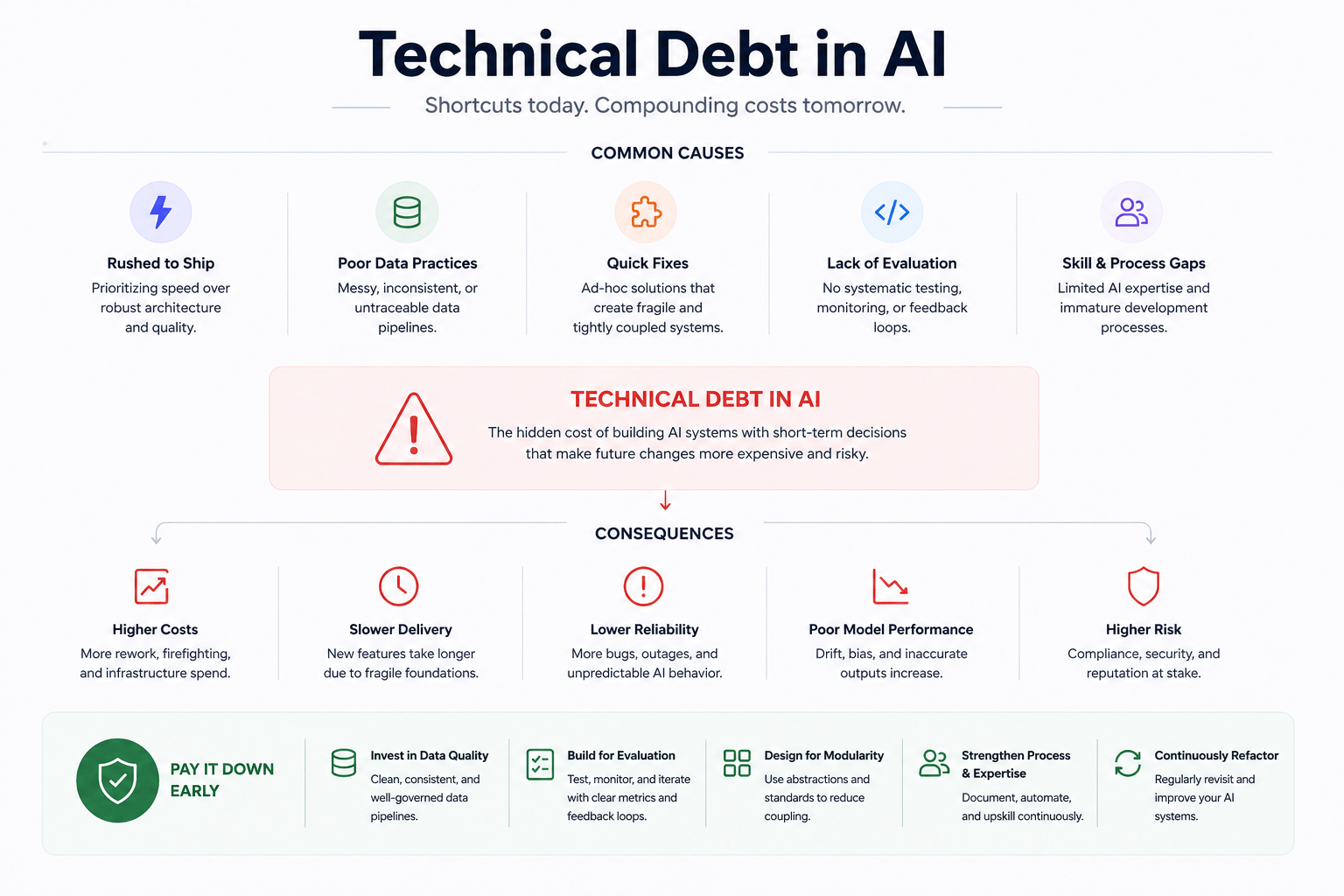

Managing Technical and Epistemic Debt

AI-generated code creates a specific type of liability that standard code reviews miss. Epistemic debt accumulates when you ship code you cannot explain, creating an illusion of competence where tests pass but understanding vanishes. Research shows unrestricted AI users suffered a 77% failure rate on maintenance tasks compared to only 39% in scaffolded groups. This collapse happens because developers outsource cognitive load required for schema formation rather than merely offloading extraneous work.

Audit AI-Generated Outputs for Overengineering

AI follows the path of least resistance, generating append-only code rather than refactoring existing structures. Copy/paste changes increase while moved code decreases, violating the rule of three and duplicating logic across the codebase. Without deep understanding of changes, developers fail to recognize when they duplicate existing patterns, creating the third variation of the same function.

AI often produces solutions suited to an ideal rather than the actual task. The code looks clean and well-formatted, lowering cognitive guard and creating overconfidence. Audit generated outputs by asking the model to justify architectural choices, then strip back to the smallest working solution. Treat AI like a junior developer that needs constraints and validation gates.

Implement Test-Driven Development for AI Code

Test-Driven Development transforms from optional practice into necessity for AI-generated code. AI thrives on clear, measurable goals, and binary tests provide the clearest targets possible. The upfront time cost disappears as AI generates boilerplate, edge cases, and test files in seconds.

Write tests that define exact behavior requirements before generation. For instance, prompt with test suites covering disabled, loading, and error states rather than vague feature requests. This gives AI concrete targets for iteration and self-correction. Advanced agents can automatically update tests when you refactor components, eliminating the brittle test maintenance that creates technical debt.
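
Here is what a test-first prompt might contain for a submit button, written with Python's built-in `unittest`. The function `submit_button_props` and its return shape are hypothetical; the implementation is included only so the example runs, whereas in a test-first workflow it would be written after the spec.

```python
import unittest


def submit_button_props(is_valid: bool, is_loading: bool, error: str = "") -> dict:
    """Hypothetical implementation under test. In a test-first workflow this
    is written (by a person or an AI tool) only after the spec below exists."""
    return {
        "disabled": is_loading or not is_valid,
        "label": "Saving..." if is_loading else "Save",
        "error_text": error or None,
    }


class SubmitButtonSpec(unittest.TestCase):
    def test_disabled_when_form_is_invalid(self):
        self.assertTrue(submit_button_props(False, False)["disabled"])

    def test_loading_disables_and_relabels(self):
        props = submit_button_props(True, True)
        self.assertTrue(props["disabled"])
        self.assertEqual(props["label"], "Saving...")

    def test_error_is_shown_and_button_stays_usable(self):
        props = submit_button_props(True, False, error="Network error")
        self.assertEqual(props["error_text"], "Network error")
        self.assertFalse(props["disabled"])
```

Saved as a module, the spec runs with `python -m unittest`. Each test name states one required behavior, which gives the model a binary pass/fail target instead of a vague feature request.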

Require Architecture Reviews Before Merging

AI generates components in isolation without considering secure connections between modules. Each prompt produces a module, but nobody prompts AI to think about how those modules interact securely. Map system architecture before code review, identifying service boundaries, data flows, and integration points.

Architecture reviews catch security issues that scanners miss: broken session handling, over-permissive IAM roles, and missing authorization boundaries. Standard reviews assume human-written code, but AI-generated code requires adapted processes combining automated pattern detection with human judgment about business logic and threat modeling.

Document AI Model Versions and Dependencies

Clearly state model inputs, algorithms, and outputs. Outline dependencies on other models and explain interaction patterns. Companies typically allocate around 15% of IT budgets for tech debt remediation, making proper documentation essential for manageable maintenance costs.
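
A lightweight way to enforce this is a structured record per model that can be checked for gaps and stored alongside the code. The field names below are assumptions rather than an established schema, and `ModelRecord` is a hypothetical name.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class ModelRecord:
    name: str
    version: str
    inputs: List[str]
    algorithm: str
    outputs: List[str]
    depends_on: List[str] = field(default_factory=list)  # upstream model names

    def missing_fields(self) -> List[str]:
        """Return the required fields that were left empty."""
        required = ("name", "version", "inputs", "algorithm", "outputs")
        return [f for f in required if not getattr(self, f)]

    def to_json(self) -> str:
        # Sorted keys keep diffs stable when the record is version controlled.
        return json.dumps(asdict(self), indent=2, sort_keys=True)
```

A check such as `missing_fields()` can run in CI so that a model cannot ship with an undocumented version or an unlisted dependency.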

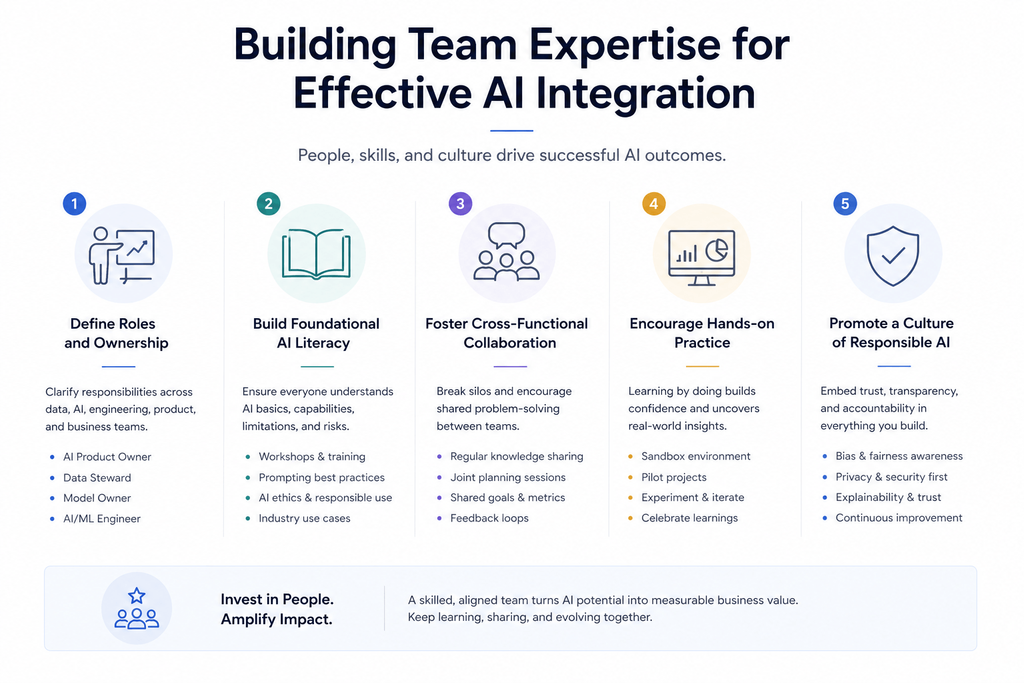

Building Team Expertise for Effective AI Integration

Executive leadership support and the right talent in key AI roles determine success for enterprises starting an AI journey. Organizations fill core AI roles by educating and training existing employees, supplemented by strategic recruitment. Consider bringing in experienced experts to work alongside employees on the first use cases.

Create Centers of Excellence for AI Skills

An AI Center of Excellence serves as an organizational structure dedicated to encouraging adoption, optimization, and governance of AI across your company. It functions as a central repository for AI-related expertise, tools, standards, best practices, and insights. By consolidating AI expertise into a single entity, you ensure that when one team discovers valuable lessons, these get documented and made available to others.

The CoE organizes training programs, workshops, and internal conferences to upskill employees, fostering continuous learning. Microsoft launched their AI CoE in 2023 as a cross-functional coordination layer that sets direction and creates shared accountability for how AI work gets done. Their culture team works with AI champions across the organization to translate enterprise AI priorities into local execution. These champions act as two-way conduits, bringing real-world feedback and blockers back to the CoE while carrying guidance, standards, and learnings back to their teams.

Pair Junior Developers with AI-Experienced Mentors

AI mentoring tools analyze skills, preferences, learning styles, and professional development objectives to connect the right mentors with the right mentees. These platforms deliver personalized learning experiences where participants receive guidance, feedback, and resources tailored to their unique goals and backgrounds.

Train Teams on Model Tuning and Data Pipelines

A structured partnership between engineering teams creating algorithms and labeling teams preparing data tackles implementation challenges. Data engineers define and implement the integration of data into overall AI architecture. Solutions Architects work with external engineering teams to gain understanding of problem statements and scope, then translate client requirements internally and build out needed processes.

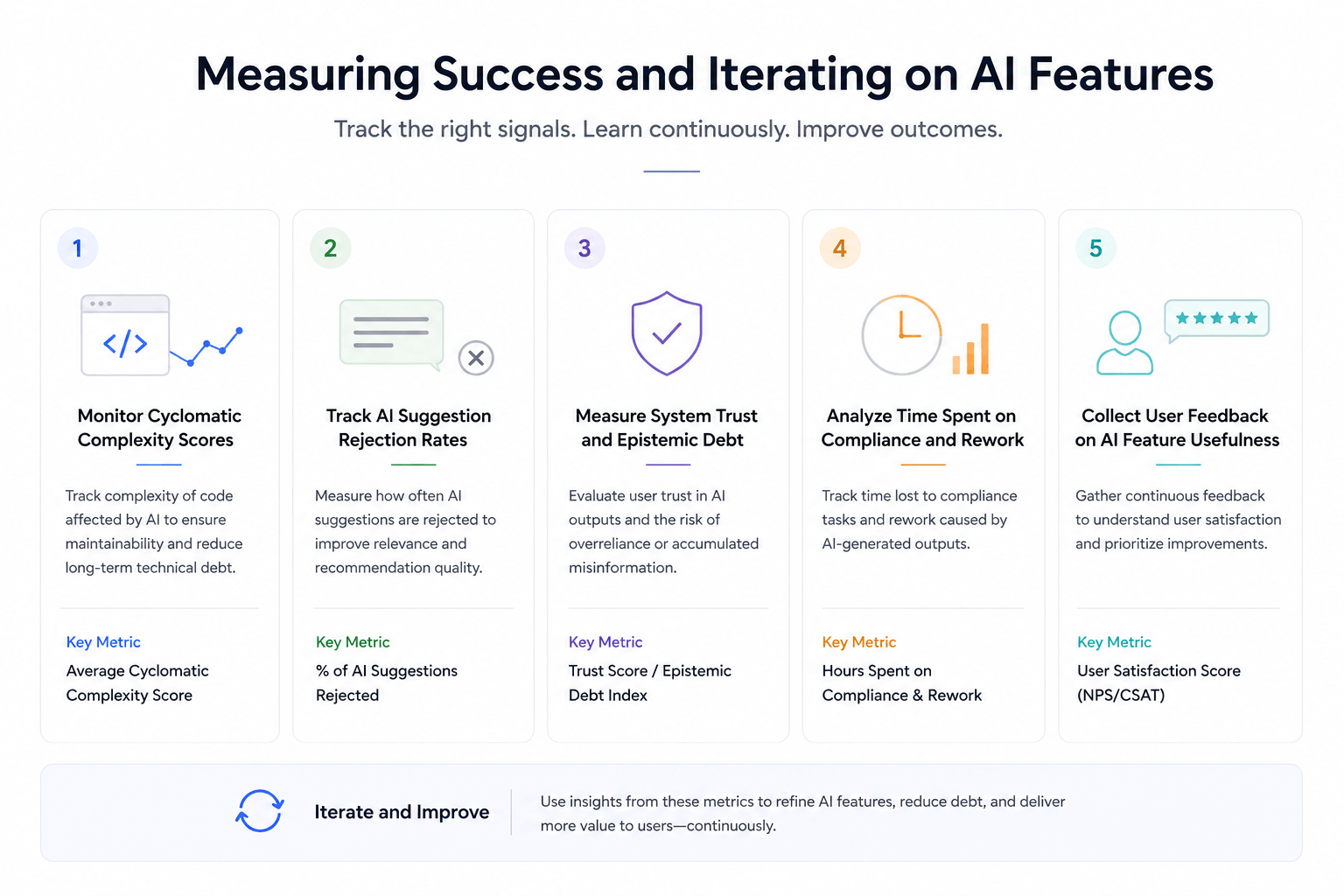

Measuring Success and Iterating on AI Features

Metrics reveal whether AI features simplify or complicate your systems. Without measurement, acceptance rates masquerade as progress while cycle times stay flat and technical debt accumulates invisibly.

Monitor Cyclomatic Complexity Scores

Cyclomatic complexity measures the number of linearly independent paths through a program’s source code. Higher scores mean more execution paths, making code harder to test. A module with high complexity requires more testing effort than one with a lower value. For a single function or program, calculate it as E − N + 2, where E is the number of edges and N is the number of nodes in the control flow graph.
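
The formula is easy to check by hand on a small control flow graph. The sketch below takes a list of edges and derives the node count from them; `cyclomatic_complexity` is an illustrative helper, and the `components` parameter reflects the general form E − N + 2P, which reduces to E − N + 2 when the graph has one connected component.

```python
from typing import List, Tuple


def cyclomatic_complexity(edges: List[Tuple[str, str]], components: int = 1) -> int:
    """Compute E - N + 2P for a control flow graph given as (from, to) edges."""
    nodes = {node for edge in edges for node in edge}
    return len(edges) - len(nodes) + 2 * components


# Control flow graph of a single if/else statement.
if_else = [
    ("entry", "condition"),
    ("condition", "then"),
    ("condition", "else"),
    ("then", "exit"),
    ("else", "exit"),
]
print(cyclomatic_complexity(if_else))  # 5 edges - 5 nodes + 2 = 2
```

A single `if/else` yields 2 because there are two independent paths through it; each additional decision point adds one.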

Track AI Suggestion Rejection Rates

Rejection rate equals the number of rejected AI suggestions divided by the total number of suggestions. Track what survives review, deployment, and production rather than what gets initially accepted. Teams with balanced rejection disciplines report faster debugging due to simpler code and reduced long-term maintenance burden.
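
Both numbers can be computed from the same log of AI suggestions. The sketch below assumes a hypothetical `Suggestion` record with one flag per stage; how you capture those flags depends on your tooling.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Suggestion:
    accepted: bool                    # initially accepted by the developer
    survived_review: bool = False     # still present after code review
    reached_production: bool = False  # still present after deployment


def rejection_rate(suggestions: List[Suggestion]) -> float:
    """Rejected suggestions divided by total suggestions."""
    if not suggestions:
        return 0.0
    rejected = sum(1 for s in suggestions if not s.accepted)
    return rejected / len(suggestions)


def survival_rate(suggestions: List[Suggestion]) -> float:
    """Share of all suggestions that made it through review into production."""
    if not suggestions:
        return 0.0
    survived = sum(
        1 for s in suggestions
        if s.accepted and s.survived_review and s.reached_production
    )
    return survived / len(suggestions)
```

A low rejection rate paired with a low survival rate is the warning sign: suggestions are being accepted up front and then removed later in review or after deployment.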

Measure System Trust and Epistemic Debt

Epistemic debt occurs when you ship code without understanding it. Unrestricted AI users suffered a 77% failure rate in maintenance tasks compared to 39% in scaffolded groups. The 38-percentage-point gap isolates explanation requirements as the operative variable.

Analyze Time Spent on Compliance and Rework

Monitor how AI affects compliance workflows and rework cycles in your development pipeline.

Collect User Feedback on AI Feature Usefulness

AI can classify and summarize feedback automatically, identifying 90-95% of related issues almost instantly. This reduces manual analysis time and lowers the chance that user feedback gets overlooked.

Conclusion

You now have a complete framework for designing AI features that genuinely reduce complexity rather than add to it. Start with honest assessment, implement structured design patterns, and build verification gates that prevent epistemic debt.

The biggest mistake teams make is rushing to ship AI without proper evaluation and testing. Those million-dollar failures happened because organizations skipped the fundamental questions we covered.

Focus on transparency, measure what matters, and build team expertise through structured learning. Treat AI-generated outputs with the same scrutiny you would apply to any junior developer’s work.

Take these principles, apply them systematically, and your AI features will deliver actual value.

Key Takeaways

Here are the essential insights for designing AI features that simplify rather than complicate your systems:

• Start with problem validation, not AI-first thinking – Write a clear one-sentence problem statement before any technical work to avoid expensive solutions searching for problems.

• Implement evidence-gated verification – Require AI to provide structured technical plans and trace evidence before allowing write permissions to prevent hallucination-driven errors.

• Design cognitive forcing functions – Make users answer content-specific questions to verify understanding before AI-assisted actions, reducing dangerous overreliance on AI suggestions.

• Monitor epistemic debt through rejection rates – Track what survives reviews and production rather than initial acceptance rates, as unrestricted AI users show 77% failure rates on maintenance tasks.

• Build transparency through specialized UI patterns – Use visual treatments like blue glows, explicit AI labels, and confidence-based borders to clearly separate AI content from human-generated content.

The key to successful AI integration lies in treating AI like a junior developer that needs constraints, verification gates, and continuous oversight. Organizations that skip proper assessment and rush to ship AI features often face million-dollar failures, while those following structured implementation see genuine complexity reduction and user trust.

FAQs

Q1. What causes most AI projects to fail?

Poor data quality is the primary reason behind AI project failures. When AI models are trained on incomplete, disorganized, or outdated datasets, they produce incorrect or subpar outputs. Additionally, many organizations fall into the “AI-first trap” by prioritizing AI technology before clearly defining the business problem they’re trying to solve, which leads to wasted resources and systems that users don’t trust.

Q2. How can I prevent AI features from adding unnecessary complexity to my product?

Start by writing a clear one-sentence problem statement before any technical work begins. Evaluate whether simpler solutions like rules or heuristics could solve the problem adequately. Implement evidence-gated verification that requires AI to provide structured plans before taking actions, and design cognitive forcing functions that make users verify they understand key information before allowing AI-assisted decisions.

Q3. What are the key principles for designing transparent AI interfaces?

Use specialized visual treatments to clearly separate AI-generated content from human-created content, such as blue glows, gradient highlights, and explicit AI labels. Implement confidence-based visual indicators like solid borders for high confidence and dotted borders for uncertainty. Apply progressive disclosure to show users what AI is doing, why decisions are made, and allow them to influence outcomes without overwhelming them with technical details.

Q4. How should teams measure whether AI features are actually reducing complexity?

Track cyclomatic complexity scores to measure code maintainability, monitor AI suggestion rejection rates to see what survives production rather than just initial acceptance, and measure epistemic debt by analyzing failure rates on maintenance tasks. Also analyze time spent on compliance and rework, and collect direct user feedback on AI feature usefulness to ensure the features deliver genuine value.

Q5. What team structure works best for successful AI integration?

Create a Center of Excellence (CoE) that serves as a central repository for AI expertise, tools, standards, and best practices across the organization. Pair junior developers with AI-experienced mentors to build skills systematically, and ensure proper training on model tuning and data pipelines. Establish a structured partnership between engineering teams creating algorithms and teams preparing data to tackle implementation challenges effectively.